HTTPS

So my regular visitors (hi mum!) may have noticed the URLs on all of my domains changed a little recently. That’s because I have finally moved to HTTPS by default. There are many good reasons to do this (more secure when logging in to e.g. my blog, less snooping on people by bad actors, better search ranking, probably others), but the one that finally swung it for me was that I wanted to give Let’s Encrypt a go!

If you haven’t heard of Let’s Encrypt, it is a free service that issues SSL certificates (i.e. the bit that puts the S into HTTPS). Its certificates have a 90-day lifetime, and there is a bot that renews them automatically on the server.

I have to admit that it took me a little while to figure out how to use it properly; putting all the subdomains on the same certificate took some working out. But the actual install process is fairly straightforward, as they’ve built a nice tool. Overall I think it is awesome. It has even auto-renewed the first lot I did already. Magic.

.uk ccTLD

Now that the .uk country code Top Level Domain is available, I have added it to the list of addresses I own. This means that these pages now live at www.ollyandbecca.uk (i.e. the .co has gone), saving you three whole keystrokes. The same is true for faji.uk.

Toilet troubles

A day or two after we moved in, we both started to think our bathroom really smelt of stale wee. Now, our bathroom had some rather lovely cork floor tiles in it, so the assumption was that the smell was residue from those. A few days and a lot of scrubbing later, we realised the toilet was leaking. Moreover, it was leaking from the soil pipe. More moreover, it was leaking from behind a boxed-in part of the bathroom. Joy. A few hours later I had managed to pull all the floor tiles up, only to find the leak had spread a disturbing distance from the toilet. Double joy. A bit later I smashed the boxed-in part away and found the leak. It was kicking out about half a shot every time the toilet flushed. The thing is, where it was leaking from makes me think it’s been leaking for years. Yummy.

Much bleach and a plumber’s visit later, we had a functioning toilet once more. Luxury.

Power to the people

One of the things we hadn’t really appreciated was that our flat had two analogue electricity meters – one day-time and one off-peak. Coupled with this was a rather retro mechanical clock that switched the peak meter on and off. So far that seems reasonable enough – a digital clock would probably have been the size of our flat in the 60s. Where it began to get a bit frustrating was when we realised the immersion heater is wired into the off-peak meter. This meant the hot water came on all night, and we had no way of turning it on ourselves. Ever. The obvious solution was to get our electricity company to come and sort it out. Simple. It would appear, however, that the folk at EDF had absolutely no idea what we were talking about when we described the meter. It took several sessions of Becca shouting at people before they understood what she meant. In fact EDF have sent a letter saying they are processing our complaint – what complaint? That’s just how she talks to people!

Anyway, they have finally come and taken the off-peak meter away and wired everything into the main meter, so we can now have hot water whenever we want. Well, about an hour after we want it. Luxury.

The laundry and the tramp

One of the things I quite like is having clean clothes. Our new place didn’t have the fittings for a washing machine installed, or any real way to dry clothes. This was a problem. So in the interim we thought we would give a launderette a go; how hard could it be?

The first problem was that launderettes don’t seem to have websites, or be listed anywhere googlable. We eventually found one not too far from us (though still a drive away).

When we went, the launderette was unmanned, so we had to figure it out ourselves. I’ve used a washing machine like a thousand times, so it should be easy enough. No. It is not. We filled the machine up, picked a setting (a random choice, since the labels were non-existent), and stuffed it full of cash. Then it started leaking. Ummm. I managed to mega-force the door shut, which stopped that problem.

After five minutes of watching the clothes go round, we decided that launderettes should have bars, and since this one didn’t, we should go and find one elsewhere. But how would we know when to come back? This is where I struck upon one of my brighter ideas: I’d go and ask the chap sitting at the other end of the launderette how long a washing cycle takes. So I did. The chap looked at me and informed me that he had no idea. It very quickly became apparent that he was in fact a tramp; of course he didn’t know how long it would take. The bar was going to have to wait.

Fifteen minutes later the next dilemma presented itself: the washing finished. Clearly we had picked the ‘cursory attempt at washing’ cycle in our random program picking. Alas, given the exorbitant prices for doing laundry (we had already spent £8), we had no choice but to accept our fate of wearing only slightly cleaner clothes for the next week.

It was rather soon after this that we bought, and installed, a washer-dryer in our flat (an almost hobo-free zone).

Our new house

As some of you may already know, we have bought a flat. It’s all part of our relocation to Bristol for work (which I shall write about at some point). It does give me a (good?) excuse to revive the blog I wrote about our last place.

It’s a 1960s flat in the Clifton area of Bristol, so it is a short walk to work in the morning. I think it is fair to say that it is a ‘project’: all the usual cosmetic stuff needs doing, the kitchen and bathroom both need redoing, the wiring needs some TLC, the garage needs making watertight, and it needs some heating. In fact it might just be easier to knock it down and start from scratch.

You can have a look at some photos of the flat before we moved all our stuff in. Needless to say it looks a lot more crowded now.

BRISSkit

I’ve been working on a new project at work for a couple of months now; it’s another of those informatics projects that I seem to be doing more of nowadays. Obviously much time was spent coming up with the acronym: Biomedical Research Infrastructure Software Service kit. It is based up at the Glenfield Hospital with the Cardiovascular Biomedical Research Unit.

The main idea behind the project is to plug together a bunch of software packages and stick them on the cloud. Other researchers will then be able to come along and get an instance of our software stack at the click of a button (well, a few keystrokes really). The obvious benefit is that it will take a few minutes to set up, as opposed to the few years it has taken to get the software to where it is now, and it will be centrally maintained, so no specialist IT skills will be needed.

We are currently going down the route of putting it on a VMware-backed cloud infrastructure, so my main responsibility (on account of being a Linux geek) is to get it all into the cloud. Then we need to be able to monitor and manage it, so that’s in my domain too.

As you’d expect, we have a website and blog that we update on an irregular basis.

HALOGEN goes live!

For the last six months or so I have been working on a project called HALOGEN, a venture to bring together various geospatial data sets into one unified database. By doing this we can start to ask cross-data-set queries – something that you certainly couldn’t do when one source is in an Excel file at one university and the other in an Access database at another!

One of the advantages of this approach is that we can then stick a web front end on the database and let the general public look at the data. It is this bit that we launched yesterday. If you head on over to halogen.le.ac.uk and run a query, you should be able to find the derivation of your favourite English place name, where your ancestors lived in 1881, or even what treasure has been found and where!

Maps

Becca and I have bought a couple of antique maps of places we have lived over the years, but we have never got around to getting them properly framed. Well we finally did, and they look awesome!

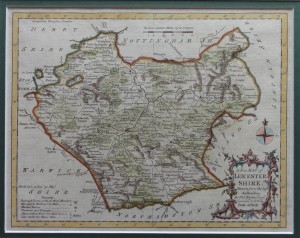

This is a 1764 hand-coloured engraving of Leicestershire by Thomas Kitchen.

This is an 1806 hand-coloured copper engraving of the Environs of London by R. Phillips of New Bridge Street.

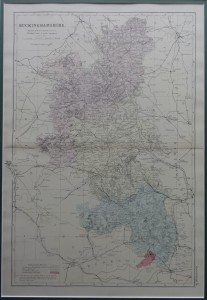

This is a c.1880 Ordnance Survey map of Buckinghamshire by G. W. Bacon. The sharp-witted amongst you will know that we used to live in Milton Keynes, which wasn’t invented (in its current form) until 1967, but Milton Keynes village is just about visible if you know where to look.

We got them framed by our local map shop. We just need to get nice ones of Norfolk and Sri Lanka and we will be done.

ArcGIS 9.3 -> MySQL jiggery pokery

I am finishing up on a project at work called HALOGEN, it’s a cool geospatial platform that I’ve been developing to help researchers store and use their data more efficiently. At its core, HALOGEN has a MySQL database that stores several different geospatial data sets. Each data set is generally made up of several tables and has a coordinate for each data point. Now most of the geo-folk at work like to use ArcGIS to do their analysis and since we have it (v9.3) installed system-wide I thought I would plug it into our database. Simple.

As it happens the two don’t like to play nicely at all.

To get the ball rolling I installed the MySQL ODBC driver so the two could communicate. That worked pretty well, with ArcGIS able to see the database and the tables in it. However, trying to do anything with the data was close to impossible. Even the simplest data set, which consisted of a single table, would not plot as a map. The problem was the way ArcGIS interpreted the data types coming from MySQL: each and every one was treated as a text field, which meant it couldn’t use the coordinates to plot the data. I would have thought the ODBC driver would give ArcGIS something it could understand, but I guess not. The workaround I used was to change the data types at the database level to INTs (they were stored as MEDIUMINTs on account of being British National Grid (BNG) coordinates). I know this is overkill, and a poor use of storage etc., but as a first attempt at a fix it worked.

Then I moved on to the more complex data sets, made up of several tables with rather involved JOINs needed to describe the data properly. This posed a new problem, since I couldn’t work out how to JOIN the data on the ArcGIS side to a satisfactory level. So the solution I implemented here was to create a VIEW in the database that fully denormalised the data set. This gave ArcGIS all the data it needed in one table (well, not a real table, but you get the idea).

If we take a step back and look at the two ‘fixes’ so far, you can see that they can easily be combined into one. By recasting the integer columns from the original data inside the VIEW, I can keep the data types I want in the source tables and still make ArcGIS think it is seeing what it wants.
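A minimal sketch of that combined fix: one VIEW that both flattens a two-table data set and recasts the coordinate columns. It uses Python's built-in sqlite3 module so it runs without a MySQL server. The tables `place` and `find`, the view `find_flat` and the sample rows are all made up for illustration, not the real HALOGEN schema. SQLite's `CAST(... AS INTEGER)` stands in for MySQL's `CAST(... AS SIGNED)`, which returns a BIGINT; whether ArcGIS 9.3 reads that type any better over ODBC is an assumption, not something tested here.

```python
import sqlite3

# SQLite stand-in for the MySQL database. SQLite accepts MEDIUMINT as a
# type name (it gets integer affinity), so the source columns look like
# the MySQL originals.
conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Hypothetical normalised source tables.
CREATE TABLE place (
    place_id INTEGER PRIMARY KEY,
    name     TEXT NOT NULL,
    easting  MEDIUMINT NOT NULL,   -- BNG easting
    northing MEDIUMINT NOT NULL    -- BNG northing
);
CREATE TABLE find (
    find_id  INTEGER PRIMARY KEY,
    place_id INTEGER NOT NULL REFERENCES place(place_id),
    object   TEXT NOT NULL
);

-- The VIEW does the JOIN (denormalising) and the recasting in one go,
-- so the source tables keep their compact types.
-- In MySQL this would be CAST(p.easting AS SIGNED).
CREATE VIEW find_flat AS
SELECT f.find_id,
       f.object,
       p.name,
       CAST(p.easting  AS INTEGER) AS easting,
       CAST(p.northing AS INTEGER) AS northing
FROM find AS f
JOIN place AS p ON p.place_id = f.place_id;
""")

# Made-up sample rows.
conn.execute("INSERT INTO place VALUES (1, 'Leicester', 458500, 304500)")
conn.executemany("INSERT INTO find VALUES (?, ?, ?)",
                 [(10, 1, "coin hoard"), (11, 1, "brooch")])

# One flat row per find, with the place details repeated on each row.
rows = conn.execute("SELECT * FROM find_flat ORDER BY find_id").fetchall()
print(rows)
```

Each row from the view carries everything a client needs to plot a point, at the cost of repeating the place details for every find.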

Then came the last of the little annoyances that got in my way. ArcGIS likes to have an index on the source data. When you create a VIEW, no index information is carried through, so again ArcGIS just croaks and you can’t do anything with the data. The rather ugly hack I used to fix this (and if anyone has a better idea I will be glad to hear it) was to create a new table with the same data types as those presented by the VIEW and do an

INSERT INTO new_table SELECT * FROM the_view

That leaves me with a fully denormalised real table with data types that ArcGIS can understand, albeit at the price of having a lot of duplicate data hanging around.
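Here is a rough sketch of that materialisation step, again with sqlite3 standing in for MySQL. The table, view and index names are hypothetical, and wrapping the copy in a `refresh_copy` helper that empties the table before re-running the `INSERT ... SELECT` is my own assumption about how you would re-sync it by hand. The explicit unique index is there because an index is the thing the VIEW could not provide.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Hypothetical source table and the VIEW presented to ArcGIS.
CREATE TABLE place (
    place_id INTEGER PRIMARY KEY,
    name     TEXT NOT NULL,
    easting  MEDIUMINT NOT NULL,
    northing MEDIUMINT NOT NULL
);
CREATE VIEW place_flat AS
SELECT place_id, name,
       CAST(easting  AS INTEGER) AS easting,
       CAST(northing AS INTEGER) AS northing
FROM place;

-- A real table matching the VIEW's columns, in the same order.
CREATE TABLE place_flat_copy (
    place_id INTEGER NOT NULL,
    name     TEXT NOT NULL,
    easting  INTEGER NOT NULL,
    northing INTEGER NOT NULL
);
-- The index that the VIEW cannot carry through.
CREATE UNIQUE INDEX place_flat_copy_pk ON place_flat_copy(place_id);
""")

# Made-up sample rows.
conn.executemany("INSERT INTO place VALUES (?, ?, ?, ?)",
                 [(1, "Leicester", 458500, 304500),
                  (2, "Milton Keynes", 485500, 238500)])


def refresh_copy(conn):
    """Rebuild the real table from the VIEW inside a single transaction."""
    with conn:  # commits on success, rolls back on error
        conn.execute("DELETE FROM place_flat_copy")
        conn.execute("INSERT INTO place_flat_copy SELECT * FROM place_flat")


refresh_copy(conn)
print(conn.execute("SELECT * FROM place_flat_copy").fetchall())
```

Because the copy is emptied first, the helper can be re-run as often as needed without tripping over the unique index.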

Ultimately, if I can’t find a better solution, I will probably have a trigger of some description that copies the data into the new real table when the source data is edited. This would give the researchers real-time access to the most up-to-date data as it is updated by others. Let’s face it, it’s a million times better than the many different Excel spreadsheets that were floating around campus!
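A trigger-based version of that idea might look roughly like the sketch below. It is a hypothetical single-table example, run in SQLite via Python's sqlite3 module so it is self-contained. MySQL trigger syntax differs slightly: it needs `FOR EACH ROW`, and the mysql command-line client needs a `DELIMITER` change for multi-statement bodies. For the multi-table data sets, every source table would need its own triggers that re-derive the affected rows of the denormalised copy. That is not shown here.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Hypothetical source table and its denormalised, indexed copy.
CREATE TABLE place (
    place_id INTEGER PRIMARY KEY,
    name     TEXT NOT NULL,
    easting  MEDIUMINT NOT NULL,
    northing MEDIUMINT NOT NULL
);
CREATE TABLE place_flat_copy (
    place_id INTEGER NOT NULL,
    name     TEXT NOT NULL,
    easting  INTEGER NOT NULL,
    northing INTEGER NOT NULL
);
CREATE UNIQUE INDEX place_flat_copy_pk ON place_flat_copy(place_id);

-- Keep the copy in step with every insert, update and delete.
CREATE TRIGGER place_ai AFTER INSERT ON place
BEGIN
    INSERT INTO place_flat_copy
    VALUES (NEW.place_id, NEW.name,
            CAST(NEW.easting AS INTEGER), CAST(NEW.northing AS INTEGER));
END;

CREATE TRIGGER place_au AFTER UPDATE ON place
BEGIN
    UPDATE place_flat_copy
    SET place_id = NEW.place_id,
        name     = NEW.name,
        easting  = CAST(NEW.easting AS INTEGER),
        northing = CAST(NEW.northing AS INTEGER)
    WHERE place_id = OLD.place_id;
END;

CREATE TRIGGER place_ad AFTER DELETE ON place
BEGIN
    DELETE FROM place_flat_copy WHERE place_id = OLD.place_id;
END;
""")

# Edits to the source table flow through to the copy automatically.
with conn:
    conn.execute("INSERT INTO place VALUES (1, 'Leicester', 458500, 304500)")
    conn.execute("UPDATE place SET name = 'Leicester (city centre)' "
                 "WHERE place_id = 1")

print(conn.execute("SELECT * FROM place_flat_copy").fetchall())
```

The trade-off compared with a periodic rebuild is that every write to the source table now does a second write to the copy. In return, the copy is never stale.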